Summary

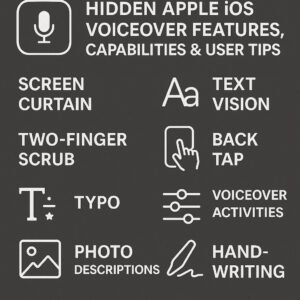

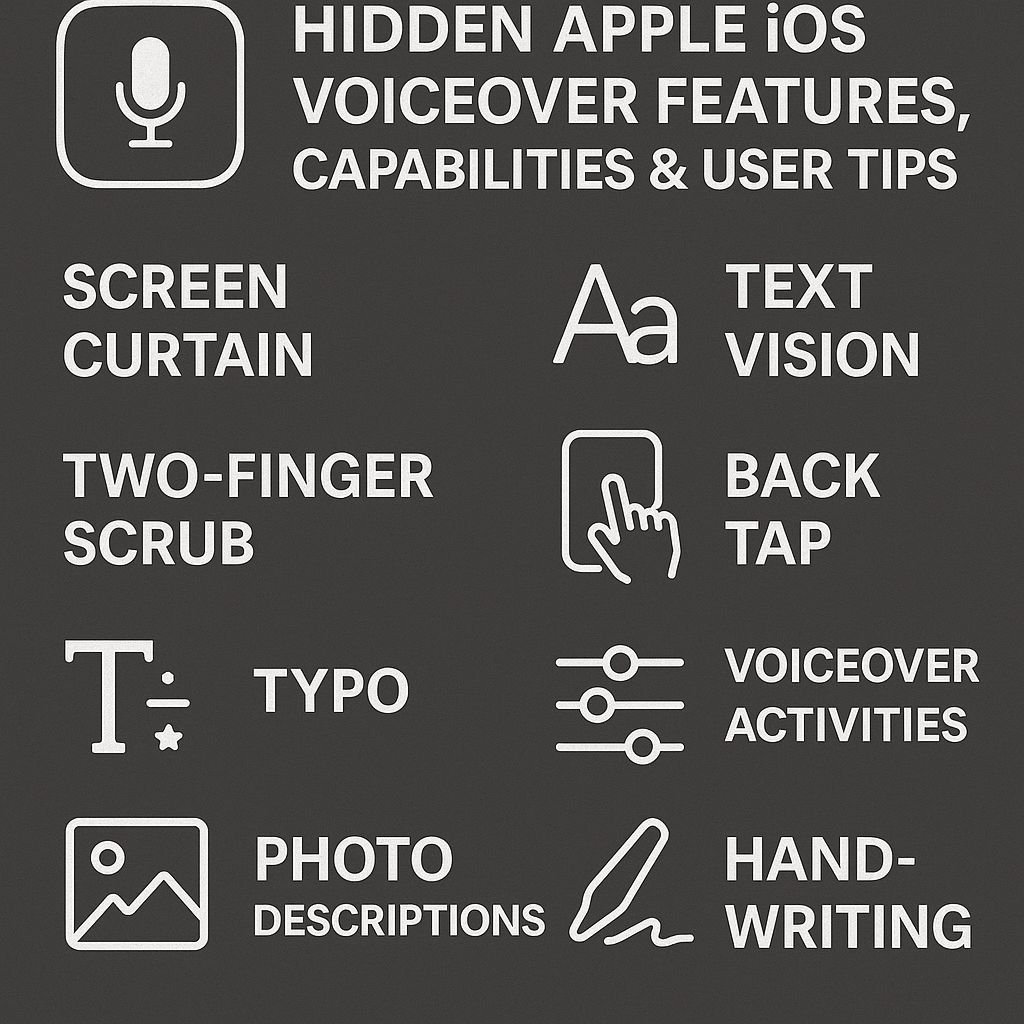

- VoiceOver has a variety of hidden gestures, such as the four-finger double tap for help mode and the “Magic Tap” for answering calls and controlling media playback

- The Rotor feature is a context-sensitive control that lets users navigate by elements such as headings and links, and it can be customized to show only the options you use

- Screen Recognition uses artificial intelligence to identify unlabeled interface elements, making previously inaccessible apps usable for blind and low-vision users

- Custom VoiceOver settings can significantly improve efficiency, including adjustable speech rates, audio ducking options, and personalized pronunciation dictionaries

- The VoiceOver Practice area allows you to safely experiment with gestures without affecting your device, which is perfect for building confidence with the screen reader

VoiceOver changes the way visually impaired users interact with their Apple devices, but many of its most powerful capabilities are hidden. Accessibility experts at AccessTech Today have found that mastering these lesser-known features can significantly improve productivity and independence for blind and low-vision iPhone users. Beyond basic navigation gestures are sophisticated commands, customization options, and AI-powered tools that unlock the full potential of iOS devices.

There’s a lot more to VoiceOver than what you see when you first set it up. It has a number of hidden features that make it much more powerful. From special ways to navigate text to controls that change depending on what app you’re in, these features make iOS one of the most accessible platforms out there. Let’s take a look at some of the powerful tools you probably didn’t know you had at your disposal.

Overview

This article takes a deep dive into the VoiceOver screen reader from Apple, highlighting some of the features that even seasoned users might not know about. We’ll cover unique gestures, options for customization, recognition tools powered by artificial intelligence, and how VoiceOver works with other accessibility features on iOS. Whether you’re just starting out with VoiceOver or you’ve been using it for years and want to get more out of it, you’ll learn about features that make iOS more powerful and accessible than ever.

According to an accessibility specialist at AccessTech Today, “The difference between basic VoiceOver use and mastery often comes down to knowing these hidden features. Many of my clients experience dramatic improvements in their digital independence after learning just a few of these techniques.”

As we dive into these secret features, keep in mind that practice is key. Don’t be disheartened if some gestures feel strange at first – muscle memory develops with consistent use, and the VoiceOver Practice area (found in Settings > Accessibility > VoiceOver > VoiceOver Practice) provides a safe space to experiment without affecting your device.

Unleashing the Power of VoiceOver Gestures

There’s a lot more to VoiceOver than just simple taps and swipes. The screen reader includes a rich vocabulary of multi-finger gestures that provide powerful shortcuts for common tasks. These gestures work consistently across apps, giving you reliable tools regardless of what you’re doing on your device. Learning just a few of these commands can significantly improve your efficiency and reduce the number of steps needed to accomplish tasks.

Use Four Fingers to Double Tap for VoiceOver Help

One of the most underutilized features of VoiceOver is the built-in help mode. A swift double-tap with four fingers on any part of the screen will activate VoiceOver Help. This is a special mode where gestures are described instead of being performed. It lets you practice and learn gestures without the fear of accidentally triggering something you didn’t mean to. Just do any gesture while in help mode, and VoiceOver will tell you what that gesture usually does.

When you’re trying to remember how to do something or learning a new combination of gestures, this feature can be a lifesaver. If VoiceOver misinterprets your four-finger double-tap as a three-finger double-tap, you may hear “Speech off.” Just do a three-finger double-tap to turn VoiceOver speech back on. To exit help mode after you’re done experimenting, do another four-finger double-tap.

Effortlessly Navigate Documents with Three-Finger Swipes

Long documents, webpages, and emails can be a pain to navigate. But with three-finger swipes, it’s a breeze. Swiping up or down with three fingers lets you scroll through content while keeping your current reading position. This is like traditional scrolling, but it’s designed for screen reader users. It prevents the loss of focus that often happens with standard navigation methods.

Three-finger swipes left and right move between pages. Swiping left with three fingers goes to the next page in documents or views that have multiple pages, and swiping right returns to the previous page. In apps like Books, or when you’re reading a multi-page article on the web, this gesture means you don’t have to find and activate next/previous buttons, which makes reading a lot easier.

The gestures are especially effective when used in conjunction with the reading controls that are accessible via the rotor. This lets you strike the perfect balance between automatic reading and manual navigation. For instance, you could use continuous reading (swiping down with two fingers) to have the text read out loud, then interrupt with a three-finger swipe when you need to move forward in the document.

Two-Finger Double Tap: The Magic Tap Command

The “Magic Tap,” a double tap with two fingers, is probably the most useful VoiceOver gesture. This command is context-aware and performs the most appropriate action based on what you’re currently doing. If you’re receiving a call, Magic Tap answers it; if you’re already on a call, it ends it. If you’re listening to music or podcasts, it plays or pauses playback without you having to find the controls on the screen.
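One way to picture this context awareness is as a short priority check. The sketch below is a conceptual model in Python, not Apple's implementation: the `DeviceState` fields, the `magic_tap` function, and the rule that calls take priority over media are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class DeviceState:
    """Hypothetical snapshot of what the phone is doing (illustration only)."""
    incoming_call: bool = False
    on_call: bool = False
    media_playing: bool = False
    media_loaded: bool = False


def magic_tap(state: DeviceState) -> str:
    """Return the action a two-finger double tap triggers in this toy model.

    Assumption: calls are checked before media, so a ringing phone wins
    over a song that happens to be playing.
    """
    if state.incoming_call:
        return "answer call"
    if state.on_call:
        return "end call"
    if state.media_playing:
        return "pause media"
    if state.media_loaded:
        return "resume media"
    return "no default action"
```

In this model the same gesture maps to a different result purely because the state differs, which is the idea behind a context-aware command.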

Text Selection Controls and the Three-Finger Triple Tap

When you’re editing text, VoiceOver’s text selection controls give you precise control over what is highlighted. Be aware that on a default configuration the three-finger triple tap toggles Screen Curtain rather than starting a selection, so if the screen goes dark, repeat the gesture to bring it back. The dependable route to selection is the rotor: set it to “Text Selection,” swipe up or down to choose a unit such as character, word, or line, then swipe right or left to grow or shrink the selection. This is especially useful when you’re writing emails, messages, or documents, and it allows visually impaired users to edit with the same precision as users who can see.

Personalizing VoiceOver for a Tailor-Made Experience

VoiceOver is more than just a screen reader. It’s a customizable tool that can be adapted to the way you work. From speech rate to feedback settings, VoiceOver lets you build an experience that fits your preferences and usage patterns.

There are a lot of settings that you can easily access through VoiceOver Quick Settings. To access this feature, you just need to do a two-finger quadruple-tap. This way, you don’t have to go through the hassle of navigating through the Settings app. You can customize the specific parameters that are available in Quick Settings. Just go to Settings > Accessibility > VoiceOver > Quick Settings and prioritize the adjustments you make most frequently.

Discovering the perfect mix of these settings can greatly enhance both ease of use and auditory comfort, particularly during long iPhone usage sessions. Let’s delve into some of the most influential customization options that often go unnoticed.

Managing Audio Ducking

Audio ducking is a feature that automatically reduces the volume of other audio sources when VoiceOver is speaking. This is a great feature to have when you’re listening to music, videos, or podcasts and don’t want to miss any important VoiceOver announcements. You can manage this feature by going to Settings > Accessibility > VoiceOver > Audio. There, you’ll have the option to duck all audio or just other media playback.

Changing the Speed and Tone of VoiceOver

While the standard speed of VoiceOver is fine for most, you can increase your productivity by adjusting this setting. Many experienced users slowly increase the speed of VoiceOver as they become more comfortable with it, and eventually understand speech at speeds that would be incomprehensible to beginners. You can change the speed of speech through the rotor by selecting “Speaking Rate” and swiping up or down, or through VoiceOver settings for more permanent changes.

Other than speed, you can personalize the pitch and intonation of the voice to make it easier to understand and to prevent your ears from getting tired. VoiceOver gives you several voice choices with different tones so you can choose the ones that sound best and are easiest for you to understand. Some people like to use lower voices when they’re reading a lot, while higher voices might be better when there’s a lot of background noise. You can change these settings by going to Settings > Accessibility > VoiceOver > Speech.

Personalized Pronunciation Dictionaries

Many people don’t know that VoiceOver lets you create custom pronunciations for words, names, or abbreviations that it consistently gets wrong. This feature can be found under Settings > Accessibility > VoiceOver > Speech > Pronunciations, and it lets you tell VoiceOver exactly how to say certain text. This is really useful for technical terms, brand names, or contact names that VoiceOver has trouble with.

The pronunciation dictionary can handle both phonetic spellings and replacement words. Let’s say VoiceOver is having a hard time pronouncing your co-worker’s name, “Siobhan.” You can create an entry that replaces it with the phonetic spelling, “Shi-vawn.” You might also want acronyms like “iOS” to be read as individual letters instead of as a word. You can specify that in your custom dictionary.
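Conceptually, a pronunciation dictionary is a lookup table applied to text before it is spoken. The following Python sketch models that idea only. It is not how VoiceOver is implemented, and the `PRONUNCIATIONS` table and `apply_pronunciations` function are hypothetical names. It assumes case-sensitive, whole-word matching.

```python
import re

# Hypothetical entries: written form -> how it should be spoken.
PRONUNCIATIONS = {
    "Siobhan": "Shi-vawn",
    "iOS": "i O S",
}


def apply_pronunciations(text: str, entries: dict) -> str:
    """Replace each whole-word match with its spoken substitute."""
    if not entries:
        return text
    # Longest entries first, so a longer phrase wins over a shorter one it contains.
    alternatives = sorted(entries, key=len, reverse=True)
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(item) for item in alternatives) + r")\b"
    )
    return pattern.sub(lambda match: entries[match.group(1)], text)


print(apply_pronunciations("Siobhan updated iOS today.", PRONUNCIATIONS))
# prints: Shi-vawn updated i O S today.
```

Matching on word boundaries keeps an entry for a short term from rewriting part of a longer word, which is one reason to test new entries on a few real sentences.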

How to Adjust Verbosity Settings for Different Situations

The verbosity controls in VoiceOver give you the power to choose how much information is provided to you and how efficiently it is delivered. You can find these controls in Settings > Accessibility > VoiceOver > Verbosity. They allow you to decide exactly how much information VoiceOver gives you in different situations. For example, you can choose if VoiceOver tells you when the screen dims or wakes up, if it reads out hints after saying the names of elements, and how it deals with changes in punctuation and formatting.

Experienced users usually adjust the verbosity to suit the task at hand. For example, if you’re reading an article, you might want to have as much formatting information as possible to understand the layout of the document. But if you’re just casually browsing through apps, you might prefer to have minimal feedback. The punctuation settings are especially useful for people who write or edit text. You can set up VoiceOver to speak every punctuation mark when you’re reviewing text.

Using Rotor: A Hidden Gem for VoiceOver Pros

The VoiceOver rotor is one of the most potent and yet least used features of the entire accessibility suite. This virtual control, which is activated by twisting two fingers on the screen as if turning a dial, provides contextual navigation options that change the way you interact with content. The rotor changes to match what you’re doing, offering relevant navigation methods whether you’re browsing a webpage, writing an email, or editing a spreadsheet.

Many people know the rotor’s basic functions, such as changing the speech rate or navigating by headings, but few have explored its full potential to speed up navigation. The rotor is highly customizable, so you can include only the options you use regularly and avoid cycling through ones that aren’t relevant to you. You can customize it in Settings > Accessibility > VoiceOver > Rotor.

Getting to grips with the rotor’s context sensitivity is crucial if you want to become proficient in VoiceOver. The options it presents will vary depending on the app you’re using and the content you’re dealing with, offering specific navigation tools exactly when you need them. Let’s delve into some of the rotor’s most impressive features that frequently go unnoticed.

Accessing and Setting Up Rotor Options

To access the rotor, you just need to use two fingers to make a rotating gesture on the screen, as if you were turning a dial. Put two fingers on the screen a little bit apart and rotate them clockwise or counterclockwise to go through the available options. After you’ve chosen a rotor setting, you can navigate with that setting by swiping up or down with one finger instead of the usual element-by-element navigation.

It’s important to tailor your rotor options to suit your needs for the sake of productivity. In Settings > Accessibility > VoiceOver > Rotor, you’ll see a host of potential options to include, from basic navigation aids like headings and links to specialized tools like text attributes and language selection. By handpicking only the options you use frequently, you can optimize the rotor experience and avoid wasting time scrolling through seldom-used choices.

You’ll really see the rotor shine when you realize that different apps can have different rotor setups. A news reading app might benefit from heading and paragraph navigation, while a music app might be better served with custom actions and buttons. Play around with different rotor setups for your most-used apps to find the best setup for you.

Letter-by-Letter Navigation

When editing text, the rotor becomes a precision tool that allows you to navigate and edit at the exact level of detail you need. Set the rotor to “Characters” to move through text one character at a time using up and down swipes, which is great for catching typos or navigating complex passwords. The rotor can also be set to “Words” or “Lines” for increasingly broader navigation, giving you full control over text review and editing.

Character navigation is especially helpful when you’re proofreading text. It makes VoiceOver read out each character one by one, including spaces and punctuation. This can help you catch small mistakes that you might miss when you’re reading by words or sentences. When you use character navigation with text selection gestures, it gives you the same level of control over editing that sighted people have.

Speedy Navigation Among Headings, Links, and Form Controls

Web browsing is made much easier when you use the rotor to navigate by specific element types. Set the rotor to “Headings” to quickly jump from one section title to another on a webpage, “Links” to review all clickable elements, or “Form Controls” to find text fields and buttons. These navigation methods turn what could be a slow linear process into a fast, structured exploration experience. This approach is similar to how sighted users scan pages visually, jumping from one important element to another rather than processing everything in order.
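To picture how the rotor changes what a swipe does, here is a small conceptual model in Python. It is a toy, not VoiceOver's real code: the `Element` and `Rotor` classes and their method names are invented for this illustration. It assumes a page is a flat list of labeled elements.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Element:
    kind: str   # for example "heading", "link", "form control", "text"
    label: str


@dataclass
class Rotor:
    """Toy model: a dial of element kinds plus a cursor into the page."""
    options: list
    page: list
    selected: int = 0    # index into options
    position: int = -1   # index into page; -1 means before the first element

    def turn(self, steps: int = 1) -> str:
        """Two-finger rotation: cycle through the options, wrapping around."""
        self.selected = (self.selected + steps) % len(self.options)
        return self.options[self.selected]

    def swipe_down(self) -> Optional[str]:
        """One-finger swipe down: jump to the next element of the selected kind."""
        kind = self.options[self.selected]
        for index in range(self.position + 1, len(self.page)):
            if self.page[index].kind == kind:
                self.position = index
                return self.page[index].label
        return None  # nothing further of that kind; the cursor stays put
```

With the dial on "heading", each swipe skips everything that is not a heading, which is why structured navigation is so much faster than moving element by element.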

App-Specific Rotor Actions

Several apps have custom actions that can be found in the rotor, which offer shortcuts to app-specific features without having to find buttons on the screen. For instance, in Mail, you may see actions for archiving, flagging, or deleting messages; in social media apps, you may find options for liking, sharing, or saving content. When VoiceOver senses that custom actions are available, it will say “Actions available” when focusing on compatible elements.

Custom actions are often missed, but they can significantly speed up routine tasks within apps. When VoiceOver tells you that actions are available, change the rotor to “Actions” and swipe up or down to choose from the options available, then double-tap to activate. This method often allows for quicker access to functions than going through the app’s visual interface, saving a lot of time and effort.

Screen Recognition: VoiceOver’s AI Assistant

Screen Recognition is one of the most revolutionary advancements in VoiceOver in recent years, using artificial intelligence to make previously inaccessible apps usable. This feature analyzes on-screen content to identify unlabeled buttons, images, text fields and other interface elements that developers haven’t properly coded for accessibility. By essentially “seeing” what’s on screen, VoiceOver can provide descriptions and interactive elements even when apps lack proper accessibility tags.

Screen Recognition, which was first introduced in iOS 14, continues to get better with each iOS update, becoming more and more accurate at identifying complex interface elements. This technology works in the background to supplement traditional accessibility information, filling in the gaps where developers have not implemented proper accessibility features. For many users, this feature has turned completely unusable apps into functional, if not perfect, experiences.

Screen Recognition really shines when you’re using apps from developers who haven’t made accessibility a priority. Banking apps, food delivery services, and niche industry apps that were once impossible to navigate can become usable with this feature turned on. While it’s not perfect, Screen Recognition often provides enough information to make key features accessible.

“Screen Recognition has been a game changer for many of our clients. Apps they previously needed help from someone who can see to use suddenly became independently accessible. It’s not perfect, but it’s opened doors that were completely closed before.” – iOS Accessibility Trainer

How to Turn On Screen Recognition

To turn on Screen Recognition, go to Settings > Accessibility > VoiceOver > VoiceOver Recognition > Screen Recognition and switch the feature on. The first time you turn it on, your device will download the necessary machine learning models, which may take a few moments depending on your internet speed. Once it’s on, the feature works automatically in the background, supplementing accessibility information across all your apps without needing further setup. For more detailed guidance, you can refer to the Apple Support page on VoiceOver.

Apps That Get the Most Out of Screen Recognition

- Food delivery and restaurant apps that use a lot of images

- Banking and finance apps that use complex custom controls

- Social media platforms that change their interfaces often

- Shopping apps that use visual product galleries

- Transportation and rideshare services that use maps and interactive elements

Screen Recognition doesn’t take the place of developers making their apps accessible, but it does help when apps don’t meet accessibility standards. The feature works best on apps that use the standard iOS interface, where it can easily identify common controls and patterns. Apps that use unusual or heavily customized interfaces might still be a challenge, but even partial recognition often gives enough information to make apps that were previously unusable at least somewhat usable.

Recent iOS updates have improved the performance of Screen Recognition, addressing initial worries about its effect on battery life and device responsiveness. The feature now runs more smoothly and has a minimal effect on the overall performance of the device, even on older iPhone models. This improvement means that Screen Recognition can be used regularly, rather than only when it’s absolutely necessary.

When VoiceOver identifies elements that wouldn’t normally be accessible, it treats them as standard interactive controls. You can navigate to these identified elements using standard VoiceOver gestures and interact with them through double-taps just like properly coded buttons or fields. In many cases, users won’t even realize they’re interacting with content that lacks proper accessibility coding; the experience feels seamless.

Although Screen Recognition may not always identify elements correctly or may overlook subtle interactive elements, it improves with each iOS update as Apple fine-tunes the underlying machine learning models. To get the most out of these continuous improvements in recognition accuracy and performance, make sure your device is always updated to the latest iOS version.

Drawbacks and Solutions

Screen Recognition, while impressive, isn’t flawless. It occasionally misidentifies elements, gives incomplete labels, or doesn’t recognize highly customized controls. When you run into these drawbacks, pairing Screen Recognition with other recognition features of VoiceOver like Text Recognition or Image Descriptions usually gives better outcomes. For apps you use a lot, adding custom labels to problematic elements (by focusing the item, then double-tapping and holding with two fingers to enter a label) can permanently enhance the experience beyond what automatic recognition provides.

How Siri and VoiceOver Work Together

Apple’s Siri and VoiceOver features are designed to work together to provide an enhanced user experience. While many people use these features separately, when used together, they offer a unique set of capabilities that make it easier to interact with your device. When VoiceOver is turned on, Siri provides more detailed responses than it usually does when displaying information visually. This ensures that users who are blind or visually impaired get the full context of the information.

Using Voice Commands to Adjust VoiceOver Settings

You can ask Siri to turn VoiceOver on or off by simply saying “Turn VoiceOver on” or “Turn VoiceOver off.” This is a great feature for when you need to quickly turn on accessibility features without having to go through all the settings. You can also ask Siri to adjust VoiceOver settings by saying things like “Slow down VoiceOver speech” or “Increase VoiceOver volume,” giving you hands-free control over your screen reader experience.

Using VoiceOver to Get More Detailed Responses

When you use VoiceOver, Siri automatically gives you more detailed responses than it would for people who can see. For instance, if you ask Siri about the weather, it might read out all the details of the forecast instead of showing a visual summary. This extra detail makes sure you get all the information you need without having to go to more screens. This shows how well Apple has thought about making things work smoothly for people who can’t see.

When VoiceOver is turned on, Siri can describe images, combining the power of visual intelligence with the capabilities of a voice assistant. If you ask Siri, “What’s in this image?” while looking at a photo, you’ll get a detailed description that goes beyond simple image recognition. This makes visual content more accessible without the need for specialized photography apps.

Recognizing Text and Live Descriptions

Apple has added potent machine learning capabilities to VoiceOver that can recognize and interpret visual data in real-time. These features change the way blind users interact with digital content and the physical world, offering unprecedented access to data that was previously inaccessible without the help of someone who can see.

Finding and Reading Text in Images

VoiceOver’s Text Recognition feature can automatically find text in images, photos, and even handwritten notes. When you move to an image that has text, VoiceOver tells you there’s text there and lets you interact with it like it’s regular digital text. This feature works with photos in your library, images on websites, and even documents you take pictures of with the camera. The technology can recognize many languages and different types of text, making it very versatile for use in the real world.

Text Recognition is especially helpful when dealing with inaccessible PDFs, photographed documents, or physical world signage. For students and professionals, this feature turns previously inaccessible materials into readable content without needing sighted help. The feature continues to get better with each iOS update, handling increasingly complex text layouts and challenging handwriting styles. To learn more about using this feature, you can refer to the VoiceOver guide on Apple’s support site.

Get Image Descriptions Without Leaving Your App

VoiceOver can describe images across iOS, giving you context about visual content without interrupting your work. When you come across images in apps, websites, or messages, VoiceOver can look at the content and provide descriptions that range from basic scene recognition to detailed descriptions of complex images. This feature works automatically for many images, but you can also manually trigger it by selecting an image and using the “Describe Image” action from the rotor.

The success of image descriptions depends on the complexity of the image, but the system continues to get better with every iOS update. Simple images like logos or everyday items get very accurate descriptions, while more complicated scenes might get more general categorizations. For important images where automatic descriptions aren’t enough, users can ask for more detailed analysis through the rotor actions menu.

How to Use People Detection and Door Detection

Beyond reading text and describing images, iOS includes detection features that work alongside VoiceOver to help you navigate your environment. With People Detection, which is available in the Magnifier app, you can use your iPhone’s camera to find out if there are people near you and how far away they are. This can be very helpful when you’re in a public place and you want to keep a safe distance from others or find your way through a crowd.

Another unique recognition tool, Door Detection, can identify doors and provide details about their type, distance, and whether they are open or closed. It can often recognize door numbers and signs, making it easier to find specific rooms or entrances. These specialized tools, combined with VoiceOver’s general image and text recognition capabilities, form a complete suite of visual assistance features that enhance spatial awareness and independence.

Enhanced Braille Support for Visually Impaired Users

VoiceOver delivers extensive braille support, providing both on-screen braille input and compatibility with external braille displays. These features make iOS devices fully accessible to deaf-blind users and those who prefer tactile interaction over audio feedback. The braille implementation in iOS is among the most comprehensive available on any mobile platform, supporting multiple braille codes and input methods.

Linking Braille Displays

iOS is compatible with a variety of refreshable braille displays that can be connected via Bluetooth or USB (with an adapter). To connect a display, you usually need to go to Settings > Accessibility > VoiceOver > Braille and select “Choose a Braille Display.” Once you’ve linked the display, it will receive text from VoiceOver and its keys can be used to navigate iOS. The system automatically recognizes many common display models, which makes it easier to connect them and ensures they work with devices from leading manufacturers like HumanWare, Freedom Scientific, and APH.

Braille Input and Output Features

VoiceOver allows you to interact with braille in two ways: through a physical braille display and the on-screen braille keyboard. If you are using a physical display, any text that VoiceOver speaks is automatically sent to the display in your chosen braille code. The navigation keys on the display can also be used to control iOS, so you can operate your device without touching the screen at all.

Here are some of the braille features:

- Braille output is available in contracted or uncontracted form, and in multiple languages

- Braille codes like Nemeth Code for mathematics are supported

- Braille display timeout settings can be customized to save battery

- Status information can be shown or hidden on the braille display

- Different braille codes can be automatically translated

For those who don’t have a physical display, iOS has an on-screen braille keyboard, Braille Screen Input, that you can bring up when needed. This virtual keyboard supports both six-dot and eight-dot braille input, which lets users type in braille instead of using the standard keyboard. It can be used in tabletop mode (where the device lies flat and you type with your fingers on the screen) or screen away mode (where you hold the device with the screen facing away and tap dots as if using a physical braille writer).

Braille Screen Input is a feature that enables experienced braille users to type in an efficient and fast manner, often faster than using the standard keyboard. It translates braille input into standard text automatically, making it compatible with all iOS applications such as email, messages, and document editing apps. For users who are literate in braille, this input method allows them to use the muscle memory they’ve developed over years of braille writing. For more details on how to use VoiceOver in apps, visit the Apple support page.

Braille output formatting can be customized by advanced users through settings that control aspects like word wrapping, blank line insertion, and status cell placement. These customization options allow users to optimize the braille experience according to their preferences and the capabilities of their specific braille display. The system remembers separate settings for different connected displays, automatically switching configurations when changing between devices.

Braille Settings: Contracted vs. Uncontracted

Apple’s iOS offers options for contracted (Grade 2) and uncontracted (Grade 1) braille, giving users the ability to choose the reading format that suits them best. Contracted braille uses abbreviations and contractions to present text in a more compact form, making it quicker to read for those who are familiar with the system. Uncontracted braille, on the other hand, uses a single cell to represent each letter, which is simpler for those who are new to braille but requires more space and time to read.

Users have the ability to choose between contracted and uncontracted for both input and output. The user can switch between modes via VoiceOver settings or directly from the braille keyboard for input. Output translation settings can be set independently of input settings, providing the flexibility to type in uncontracted while reading in contracted. This flexibility allows users with varying levels of braille proficiency and different preferences for reading versus writing to use the system more effectively.
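The space saving from contraction is easy to see in a tiny example. The Python sketch below is a simplified illustration, not iOS's braille translator: it knows only the 26 letters and five whole-word contractions ("and", "for", "of", "the", "with"), and it ignores capitals, numbers, punctuation, and every other contraction rule. The function names are hypothetical.

```python
# Dot numbers for the 26 letters of the six-dot braille alphabet.
LETTER_DOTS = {
    "a": "1", "b": "12", "c": "14", "d": "145", "e": "15", "f": "124",
    "g": "1245", "h": "125", "i": "24", "j": "245", "k": "13", "l": "123",
    "m": "134", "n": "1345", "o": "135", "p": "1234", "q": "12345",
    "r": "1235", "s": "234", "t": "2345", "u": "136", "v": "1236",
    "w": "2456", "x": "1346", "y": "13456", "z": "1356",
}

# A handful of whole-word contractions; real contracted braille has many more.
WORD_CONTRACTIONS = {
    "and": "12346", "for": "123456", "of": "12356",
    "the": "2346", "with": "23456",
}


def cell(dots: str) -> str:
    """Convert dot numbers such as "145" to the matching Unicode braille cell."""
    mask = sum(1 << (int(dot) - 1) for dot in dots)
    return chr(0x2800 + mask)


def to_braille(text: str, contracted: bool) -> str:
    """Translate lowercase words letter by letter, or with the contractions above."""
    words = []
    for word in text.lower().split():
        if contracted and word in WORD_CONTRACTIONS:
            words.append(cell(WORD_CONTRACTIONS[word]))
        else:
            words.append("".join(cell(LETTER_DOTS[ch]) for ch in word if ch in LETTER_DOTS))
    return " ".join(words)


sentence = "the cat and the dog"
print(len(to_braille(sentence, contracted=False)))  # prints: 19
print(len(to_braille(sentence, contracted=True)))   # prints: 13
```

Even with only five contractions the sample sentence shrinks from 19 characters to 13, which hints at why fluent readers often prefer contracted output on a display with a limited number of cells.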

How to Create and Use Custom VoiceOver Commands

With VoiceOver, you can do more than just adjust settings: you can create custom commands tailored to your specific needs, including custom gestures, keyboard commands, and automation integrations. Personalizing VoiceOver this way streamlines the tasks you do most often and reduces the number of steps needed for common actions.

How to Set Up Custom Touch Gestures

With iOS, you can assign specific actions to custom VoiceOver gesture patterns. To do this, go to Settings > Accessibility > VoiceOver > Commands > Touch Gestures. There, you can create mappings for gestures like the two-finger scrub, three-finger tap, or four-finger swipe. You can link these gestures to functions such as reading the time, activating Siri, or toggling the screen curtain. This feature lets you prioritize your most-used functions and make them accessible through memorable gesture shortcuts instead of having to navigate through menus.

Setting Up Physical Buttons for Actions

Beyond touch gestures, you can assign accessibility functions to hardware buttons, such as the volume controls, so that you can operate your device without touching the screen. This is especially useful when touch is impractical, for example when you are wearing gloves or your hands are busy. To set up button assignments, go to Settings > Accessibility > VoiceOver > Commands > Physical Buttons.

These hardware tweaks are particularly useful for people with both visual and motor disabilities, providing alternative ways to interact that don’t solely rely on precise touch gestures. For instance, setting the volume up button to read notifications and the volume down button to pause speech creates a simple physical interface for controlling the flow of information without needing to interact with the screen.

How to Use the Shortcuts App with VoiceOver

Apple’s Shortcuts app provides automation features that work well with VoiceOver. A custom shortcut condenses a multi-step task into a single command that you can run with Siri, by touch, or from the Shortcuts widget. With VoiceOver, useful candidates include formatting and sharing text, downloading and processing media, and managing smart home devices. For more guidance, you can explore this guide on using VoiceOver in apps.

You can also assign shortcuts to VoiceOver gestures through the custom commands menu, which extends VoiceOver’s native capabilities. For instance, you could create a shortcut that pulls all the links from a webpage, formats them as a readable list, and copies them to your clipboard, then assign the whole process to a custom four-finger double-tap. A single gesture then replaces a workflow that would otherwise take multiple apps and dozens of gestures.
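The link-listing example can be sketched in Python to show the logic such a shortcut would carry out. This is not Shortcuts code, and the `LinkCollector` and `format_links` names are hypothetical. It assumes the page's HTML is already available as a string and uses only the standard library's `html.parser` module. Copying the result to the clipboard is left out.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects (label, url) pairs for every <a href="..."> on a page."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None   # URL of the anchor currently being read, if any
        self._text = []     # text fragments found inside that anchor

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self._href = href
                self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            # Collapse whitespace; fall back to the URL when the link has no text.
            label = " ".join("".join(self._text).split()) or self._href
            self.links.append((label, self._href))
            self._href = None


def format_links(html: str) -> str:
    """Return the page's links as a numbered, one-per-line list."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    return "\n".join(
        f"{number}. {label}: {url}"
        for number, (label, url) in enumerate(collector.links, start=1)
    )


page = '<p>See <a href="https://example.com/help">the  help page</a> or <a href="/contact"></a>.</p>'
print(format_links(page))
```

A numbered, one-link-per-line list is a deliberate choice here: VoiceOver can then read or step through the result line by line instead of hunting for links scattered across the page.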

Unlocking VoiceOver’s Full Potential Makes iOS a Game-Changer

Mastering VoiceOver’s hidden features transforms the iOS experience from merely accessible to truly empowering. By combining the specialized gestures, recognition capabilities, and customization options explored in this guide, visually impaired users can achieve levels of independence and efficiency that rival or exceed sighted operation. The depth and thoughtfulness of Apple’s accessibility implementation demonstrate the company’s commitment to universal design principles that benefit all users. As you work these advanced techniques into your daily routine, iOS becomes more intuitive and powerful, adapting to your needs rather than requiring you to adapt to its limitations.

Common Questions

We’ve collected some frequently asked questions that come up as users dive deeper into VoiceOver’s advanced features. The answers to these questions can help solve problems and make your experience with iOS’s accessibility features even better.

What’s the fastest way to turn VoiceOver on and off?

If you frequently switch VoiceOver on and off, the quickest way is the Accessibility Shortcut. Set it up by going to Settings > Accessibility > Accessibility Shortcut and choosing VoiceOver from the list. You can then toggle VoiceOver by triple-clicking the side button (on Face ID devices) or the home button (on Touch ID devices). You can also add the Accessibility Shortcut to Control Center for another quick way to toggle VoiceOver without opening Settings.

Does VoiceOver work with third-party keyboards?

Yes, VoiceOver is compatible with most third-party keyboards, although the degree of compatibility and user experience can vary depending on the developer. Standard typing features such as autocorrect and word prediction usually work with VoiceOver, but specialized keyboard features such as swipe typing may have limited accessibility. To get the best results, try out different keyboard options and see which one best matches your typing preferences and VoiceOver compatibility.

Is VoiceOver compatible with all iOS apps?

Not all apps are compatible with VoiceOver. Apps developed in accordance with Apple’s accessibility guidelines usually integrate well: their buttons are properly labeled, their navigation order is logical, and their custom controls are accessible. However, many third-party apps, especially those from smaller developers, have incomplete accessibility support, unlabeled buttons, or features that VoiceOver cannot reach.

Apple’s native apps usually provide excellent VoiceOver support, setting the bar for accessibility. While major apps from big companies usually provide good accessibility, the quality can vary from update to update. The App Store currently doesn’t offer accessibility ratings, so finding accessible apps often involves trial and error or recommendations from the VoiceOver user community.

For applications that lack built-in accessibility, iOS 14 and subsequent versions provide Screen Recognition (mentioned earlier in this article). This feature can greatly enhance usability by identifying elements that are not labeled. While it’s not a perfect solution, this feature often makes previously inaccessible applications at least somewhat usable without requiring updates from the developer.

“We encourage users to provide feedback to developers about accessibility issues. Many developers are simply unaware of accessibility needs rather than deliberately excluding blind users. Clear, constructive feedback often leads to improved accessibility in future updates.” — AccessTech Today

How can I practice VoiceOver gestures without affecting my iPhone?

The VoiceOver Practice area provides a safe environment to learn and experiment with gestures without triggering actual device functions. Access this feature through Settings > Accessibility > VoiceOver > VoiceOver Practice. In this mode, VoiceOver announces what each gesture would do without actually performing the associated action, allowing you to build muscle memory and confidence with complex gesture combinations.

Another useful way to practice is to turn on VoiceOver through the Accessibility Shortcut (triple-click the side or home button) while you’re in the Settings app. This way, you can quickly turn on VoiceOver to practice, and then turn it off immediately if you get confused, without messing up your normal device setup. A lot of users find this method useful when they’re learning new gestures or checking out features they’re not familiar with.

How do VoiceOver and Speak Screen features differ?

VoiceOver and Speak Screen are designed to meet different accessibility requirements and function in completely different ways. VoiceOver is a full-fledged screen reader that entirely alters the way you interact with your device, substituting the standard touch interface with unique gestures intended for non-visual use. It reads elements as you navigate them, giving you control over exactly what content you hear and when you hear it. VoiceOver is intended for users who primarily interact with their devices in a non-visual manner.

Speak Screen, which can be activated by swiping down from the top of the screen with two fingers, reads all the content currently displayed on the screen without altering the touch interface. This feature is particularly useful for users with mild to moderate visual impairments or reading difficulties who still primarily navigate visually but would like audio support for reading longer content. Speak Screen keeps the standard iOS interaction model while providing audio output.

VoiceOver offers the in-depth accessibility that most blind users need to fully control their device, while Speak Screen is a handy tool for certain reading situations or may be the preferred choice for low-vision users who still rely somewhat on visual navigation. Knowing the differences can help you choose the right tool for different situations, and some users switch between these options depending on what they are doing and where they are.

If you need help with iOS accessibility features including VoiceOver, check out AccessTech Today. Our accessibility experts offer personalized training and troubleshooting to ensure you’re making the most of your Apple devices.